Disambiguation output

The text to be analyzed

The text intelligence engine workflow is as follows:

- The input text is analyzed by the disambiguator using its resources (algorithms and data structures, among which the knowledge graph plays a crucial role).

- The disambiguator returns the disambiguation string.

- Categorization and extraction rules are applied to the disambiguation string.

Put simply, the disambiguation string is an enriched version of the original input text: the text is divided into a series of textual blocks, each tagged with many attributes (positional, morphological, grammatical, logical, syntactical, semantic, etc.) determined during the analysis.

These textual blocks are organized in a hierarchy so that each one of them features some attributes of other blocks and is linked to them by different types of relationships.

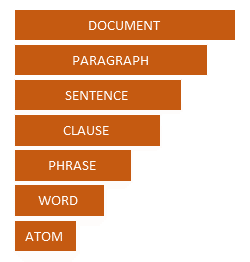

From top to bottom, the textual blocks produced by the disambiguator are:

- Document

- Paragraph

- Sentence

- Clause

- Phrase

- Word

- Atom

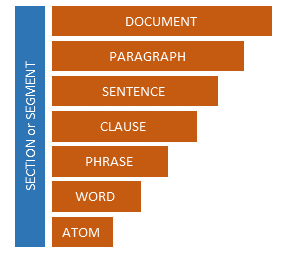
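As a minimal sketch of this ordering, the seven levels can be modeled as an ordered enumeration. The names `Level`, `parent_level` and `can_contain` are illustrative assumptions, not part of the expert.ai technology, and the optional section and segment levels are left out.

```python
from enum import IntEnum


class Level(IntEnum):
    """Textual block levels, numbered from the outermost to the innermost."""
    DOCUMENT = 1
    PARAGRAPH = 2
    SENTENCE = 3
    CLAUSE = 4
    PHRASE = 5
    WORD = 6
    ATOM = 7


def parent_level(level):
    """Return the level directly above `level`, or None for the document."""
    return Level(level - 1) if level > Level.DOCUMENT else None


def can_contain(outer, inner):
    """A block can only contain blocks that sit at a lower level of the hierarchy."""
    return outer < inner


print(parent_level(Level.ATOM).name)              # WORD
print(can_contain(Level.SENTENCE, Level.PHRASE))  # True
```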

Two more levels can be optionally added to this model if sections or segments have been defined for the input documents. Sections and segments are two custom text subdivisions that can modify the text analysis model, as seen in the following image.

Document represents the whole input text.

Sections, being optional subdivisions, may or may not be present in the input document. If they are present, the disambiguator will recognize them and will place the text blocks inside them according to their position.

Segments are also optional subdivisions which can be defined in the input document (static segments) or be dynamically built after the disambiguation and before the rules are applied. A segment is always a part of a section, therefore its boundaries can never cross a section's boundaries.

A paragraph is a unit of discourse consisting of one or more sentences dealing with a particular idea. A new paragraph typically begins after a full stop followed by a new line. A paragraph is always a part of a section, therefore its boundaries can never cross a section's boundaries.
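The boundary rule shared by segments and paragraphs can be illustrated with a small containment check. This is only a sketch: it assumes blocks are described by character offsets, and `Span`, `is_inside` and `crosses_boundary` are hypothetical names introduced for the example.

```python
from typing import List, NamedTuple


class Span(NamedTuple):
    start: int  # inclusive character offset
    end: int    # exclusive character offset


def is_inside(inner: Span, outer: Span) -> bool:
    """True if `inner` lies entirely within `outer`."""
    return outer.start <= inner.start and inner.end <= outer.end


def crosses_boundary(block: Span, sections: List[Span]) -> bool:
    """True if `block` is not fully contained in any single section."""
    return not any(is_inside(block, section) for section in sections)


# Two adjacent sections (offsets are made up for the example).
sections = [Span(0, 120), Span(120, 300)]

print(crosses_boundary(Span(10, 80), sections))    # False: fits in the first section
print(crosses_boundary(Span(100, 150), sections))  # True: straddles both sections
```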

A sentence is a portion of text consisting of one or several words, which are linked by a syntactic relation and are able to convey meaning. A new sentence typically begins after a punctuation mark such as a period, question mark or exclamation mark.

A clause consists of one or several words within a sentence representing the smallest grammatical unit that can express a complete proposition. It consists of at least a predicate (a phrase containing a verb) and possibly other phrases which have the role of subject, direct object and other complements. No clause is recognized if no verb is present. Clauses might consist of non-contiguous text parts. In the sentence:

The dog that was barking ran into the street

there are two clauses: The dog ran into the street and that was barking.
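One way to picture a non-contiguous clause is to store it as a list of token ranges rather than a single range. The sketch below assumes whitespace tokenization and uses hypothetical names (`clauses`, `clause_text`, `is_contiguous`); it does not reflect the internal representation used by the disambiguator.

```python
sentence = "The dog that was barking ran into the street"
tokens = sentence.split()

# Each clause is a list of (start, end) token ranges, with `end` exclusive.
clauses = {
    "main": [(0, 2), (5, 9)],   # "The dog" ... "ran into the street"
    "relative": [(2, 5)],       # "that was barking"
}


def clause_text(ranges):
    """Join the token ranges of a clause into a single string."""
    return " ".join(" ".join(tokens[start:end]) for start, end in ranges)


def is_contiguous(ranges):
    """A clause stored as a single range has no gaps."""
    return len(ranges) == 1


for name, ranges in clauses.items():
    print(name, "|", clause_text(ranges), "| contiguous:", is_contiguous(ranges))
```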

A phrase consists of one or several words that form a constituent of the sentence and act as a single unit in its syntax. Linguistics commonly recognizes noun phrases, verb phrases, etc. Phrases have a logical role in a clause: subject, direct object and other complements. For example, in the sentence:

John's dog ate a big bone

the phrases are: dog (subject), John's (possessive case), ate (verb predicate), a big bone (direct object).

A word is a portion of text corresponding to concepts, collocations, entities, idioms and also punctuation marks recognized during the semantic analysis. Examples of words are: dog, the, credit card, triumph, Miami, !, 234 5th Avenue, a million and a half.

Atom indicates a portion of text that cannot be further divided, that is to say a word or a punctuation mark, without considering its possible semantic value. Therefore, atoms can coincide with words or be the constituents of a word. The latter is the case of collocations, idioms and entities, which are composed of several atoms. In fact, these three textual elements possess a semantic value which is stronger than the value of each atom considered separately.
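The relationship between atoms and words can be sketched with a toy example: the text is first split into atoms, then runs of atoms that match a known multi-atom expression are merged into a single word. The regular expression, the tiny `MULTI_ATOM_WORDS` lexicon and the greedy longest-match strategy are all assumptions made for illustration; the actual disambiguator relies on the knowledge graph and on semantic analysis.

```python
import re

# Hypothetical lexicon of multi-atom words (lowercased atom sequences).
MULTI_ATOM_WORDS = {
    ("credit", "card"),
    ("a", "million", "and", "a", "half"),
}
MAX_LEN = max(len(entry) for entry in MULTI_ATOM_WORDS)


def to_atoms(text):
    """Split text into indivisible units: runs of word characters or single punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)


def to_words(atoms):
    """Greedily merge the longest run of atoms found in the lexicon into one word."""
    words, i = [], 0
    while i < len(atoms):
        for n in range(min(MAX_LEN, len(atoms) - i), 1, -1):
            candidate = tuple(atom.lower() for atom in atoms[i:i + n])
            if candidate in MULTI_ATOM_WORDS:
                words.append(" ".join(atoms[i:i + n]))
                i += n
                break
        else:
            # No multi-atom match: the atom coincides with a word.
            words.append(atoms[i])
            i += 1
    return words


atoms = to_atoms("My credit card cost a million and a half!")
print(len(atoms), atoms)
print(to_words(atoms))
```

In this made-up sentence, ten atoms collapse into five words, two of which are composed of several atoms.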

To have a clearer picture of how the subdivisions described above are applied to text, let's observe the following sample sentence:

The goldfinches that usually spend the winter in Central Europe have invaded Italy because of the unusual cold.

The sentence can be divided into two clauses:

- The goldfinches have invaded Italy because of the unusual cold (independent)

- that usually spend the winter in Central Europe (relative)

The elements constituting the independent clause are not contiguous since the relative one splits the independent clause into two portions.

Eight phrases can be recognized, four for each clause:

- Clause #1:

    - The goldfinches

    - have invaded

    - Italy

    - because of the unusual cold

- Clause #2:

    - that

    - usually spend

    - the winter

    - in Central Europe

One word (because of) is a collocation consisting of two atoms (because and of).
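The breakdown of the sample sentence can be written down as plain data to check that the pieces fit together. This is a simplified sketch: phrases are kept as strings in reading order, and only the last phrase is split into words, under the assumption that each of its remaining words is a single atom.

```python
sentence = ("The goldfinches that usually spend the winter in Central Europe "
            "have invaded Italy because of the unusual cold.")

# (clause number, phrase text), listed in reading order.
phrases = [
    (1, "The goldfinches"),
    (2, "that"),
    (2, "usually spend"),
    (2, "the winter"),
    (2, "in Central Europe"),
    (1, "have invaded"),
    (1, "Italy"),
    (1, "because of the unusual cold"),
]


def phrases_of(clause):
    """Return the phrases that belong to the given clause."""
    return [text for number, text in phrases if number == clause]


def is_contiguous(clause):
    """True if the phrases of the clause are adjacent in reading order."""
    positions = [i for i, (number, _) in enumerate(phrases) if number == clause]
    return positions == list(range(positions[0], positions[-1] + 1))


# Reading the phrases in order rebuilds the sentence (minus the final period).
rebuilt = " ".join(text for _, text in phrases) + "."
print(rebuilt == sentence)

print(phrases_of(1), is_contiguous(1))
print(phrases_of(2), is_contiguous(2))

# Words of the last phrase: the collocation "because of" is one word made of two atoms.
words = ["because of", "the", "unusual", "cold"]
atoms = [atom for word in words for atom in word.split()]
print(len(words), "words,", len(atoms), "atoms")
```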

Tokens

Generally speaking, a token is a string of characters grouped together during a process called tokenization.

This process consists of breaking up a stream of text into meaningful elements called tokens. expert.ai technology considers a token to be a word, or more precisely, a portion of text that cannot be further parsed.

In the process of developing linguistic projects, textual tokens can be searched, matched and processed by means of attributes within the linguistic rules.
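The idea of matching tokens by attributes can be illustrated outside the rule language with a short sketch. Everything here is hypothetical: the `Token` class, the `lemma` and `pos` attributes, their values and the `find` helper are invented for the example and are not the syntax or attribute set of expert.ai linguistic rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Token:
    text: str
    lemma: str  # hypothetical attribute: base form of the token
    pos: str    # hypothetical attribute: part of speech


# A short made-up token list.
tokens = [
    Token("The", "the", "article"),
    Token("goldfinches", "goldfinch", "noun"),
    Token("invaded", "invade", "verb"),
    Token("Italy", "Italy", "proper noun"),
]


def find(tokens, **conditions):
    """Return the tokens whose attributes equal all the given values."""
    return [
        token for token in tokens
        if all(getattr(token, name) == value for name, value in conditions.items())
    ]


print([t.text for t in find(tokens, pos="noun")])
print([t.text for t in find(tokens, lemma="invade", pos="verb")])
```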